The only knowledge infrastructure engineered for scale.

Deployed in your cloud.

Helping engineers adopt AI confidently

Reliability at scale

Enterprises need reliable AI. Reliability comes from what AI can access and how it reasons.

50,000+

Relationships mapped per project

5+

Retrieval paths per query

50+

Cloud resource types indexed alongside code

100%

Deterministic structural parsing

Deterministic structural parsing

Code and infrastructure are parsed deterministically into components, so understanding your system architecture never depends on unreliable LLM inference.

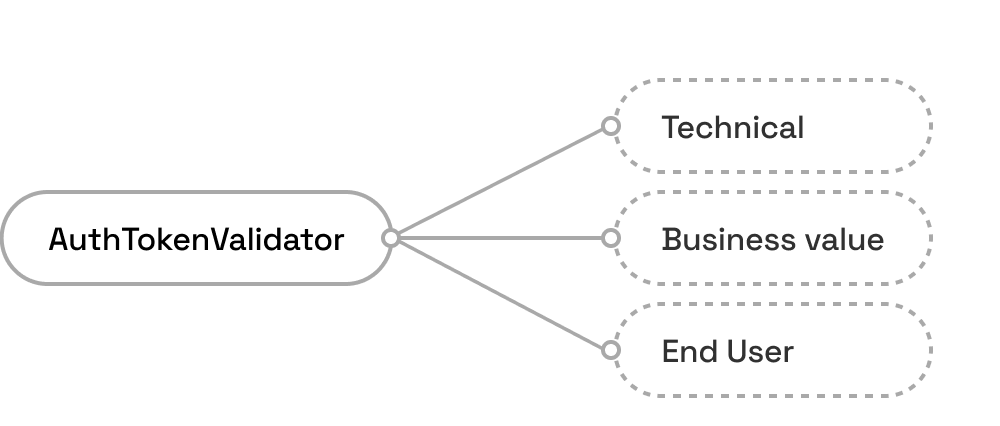
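To make "deterministic" concrete, here is a minimal sketch using Python's standard `ast` module. It is an illustration only, not Tetrix's parser: the sample source and the `extract_components` helper are hypothetical. The point is that the same input always yields the same components, with no model in the loop.

```python
import ast

# Hypothetical sample source, used only for this illustration.
SOURCE = '''
import os
from billing import charge

def checkout(cart):
    total = sum(cart)
    return charge(total)

class Cart:
    def add(self, item):
        pass
'''

def extract_components(source: str) -> dict:
    """Walk the syntax tree and collect imports, functions, and classes."""
    tree = ast.parse(source)
    components = {"imports": [], "functions": [], "classes": []}
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            components["imports"].extend(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom):
            # node.module is None for relative imports such as "from . import x".
            components["imports"].append(node.module or ".")
        elif isinstance(node, ast.FunctionDef):
            components["functions"].append(node.name)
        elif isinstance(node, ast.ClassDef):
            components["classes"].append(node.name)
    # Sort so the output does not depend on traversal order.
    for names in components.values():
        names.sort()
    return components

print(extract_components(SOURCE))
```

Running it twice on the same source gives identical output, which is the property the section describes.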

Pre-mapped relationships

Instead of forcing an LLM to guess how services interact, relationships are pre-mapped into the database before the AI ever sees them.

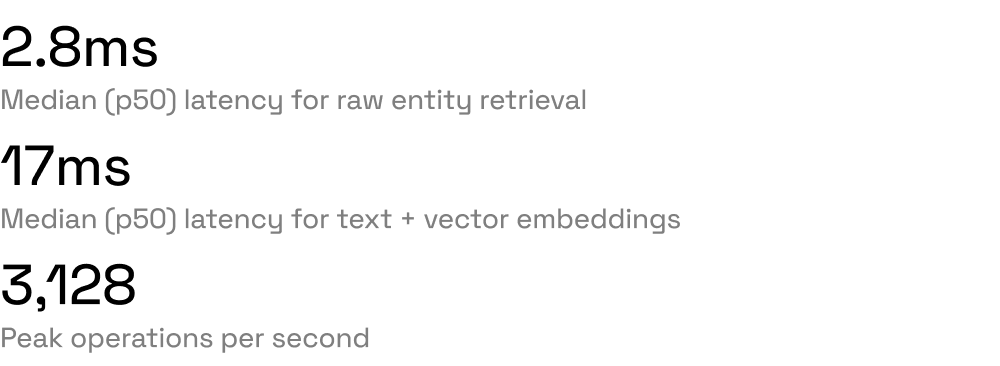
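As a rough sketch of what pre-mapping can look like, the example below stores relationships as rows in an in-memory SQLite table and answers a dependency question with a plain lookup. The schema, the service names, and the `build_graph` and `dependents_of` helpers are assumptions made for illustration; the page does not describe the actual storage format.

```python
import sqlite3

# Hypothetical relationships, written once at indexing time.
EDGES = [
    ("checkout-service", "calls", "billing-service"),
    ("billing-service", "reads", "payments-db"),
    ("reporting-job", "reads", "payments-db"),
]

def build_graph(edges):
    """Persist relationships before any query is asked."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE edges (src TEXT, relation TEXT, dst TEXT)")
    conn.executemany("INSERT INTO edges VALUES (?, ?, ?)", edges)
    conn.commit()
    return conn

def dependents_of(conn, target):
    """Return every component with a stored relationship to the target."""
    cursor = conn.execute(
        "SELECT src, relation FROM edges WHERE dst = ? ORDER BY src, relation",
        (target,),
    )
    return cursor.fetchall()

conn = build_graph(EDGES)
print(dependents_of(conn, "payments-db"))
```

The answer comes from stored rows, so there is nothing for a model to guess.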

High-fidelity retrieval

Maps over 50,000 relationships per project and runs 5+ retrieval paths simultaneously on every query, so the model receives comprehensive context.
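One way to picture several retrieval paths running at once is sketched below. It is a hedged illustration, not the product's implementation: the three stand-in paths are hypothetical, and reciprocal rank fusion is a technique chosen here for the merge step, since the page does not say how results are combined.

```python
from concurrent.futures import ThreadPoolExecutor

def fuse(rankings, k=60):
    """Merge ranked lists with reciprocal rank fusion (an assumed merge strategy)."""
    scores = {}
    for ranking in rankings:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] = scores.get(doc, 0.0) + 1.0 / (k + rank)
    # Highest score first; ties broken by name for a stable order.
    return sorted(scores, key=lambda doc: (-scores[doc], doc))

def retrieve(query, paths):
    """Run every retrieval path concurrently, then fuse their rankings."""
    with ThreadPoolExecutor(max_workers=len(paths)) as pool:
        rankings = list(pool.map(lambda path: path(query), paths))
    return fuse(rankings)

# Hypothetical stand-ins for keyword, graph, and vector retrieval.
def keyword_path(query):
    return ["auth.go", "login.go"]

def graph_path(query):
    return ["login.go", "session.go"]

def vector_path(query):
    return ["login.go", "auth.go"]

print(retrieve("how does login work", [keyword_path, graph_path, vector_path]))
```

Results that several paths agree on rise to the top, which is one reason to query more than one path.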

Architectural efficiency

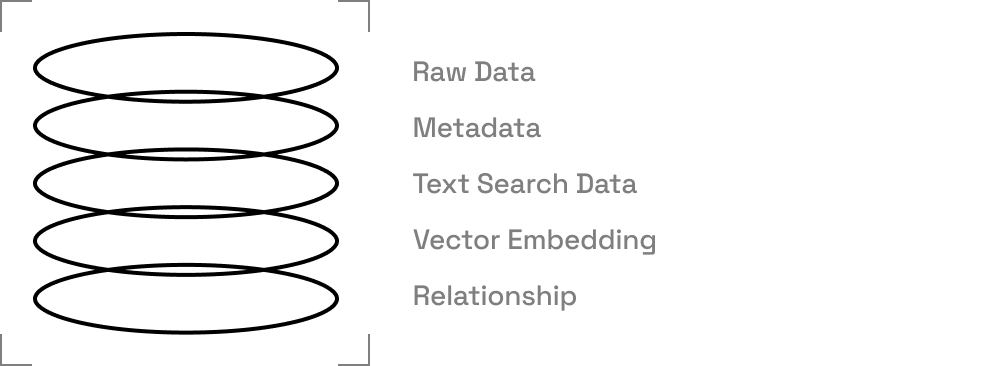

Purpose-built context database. Engineered for millisecond retrieval.

Seamless adoption

For every team.

Zero-disruption adoption.

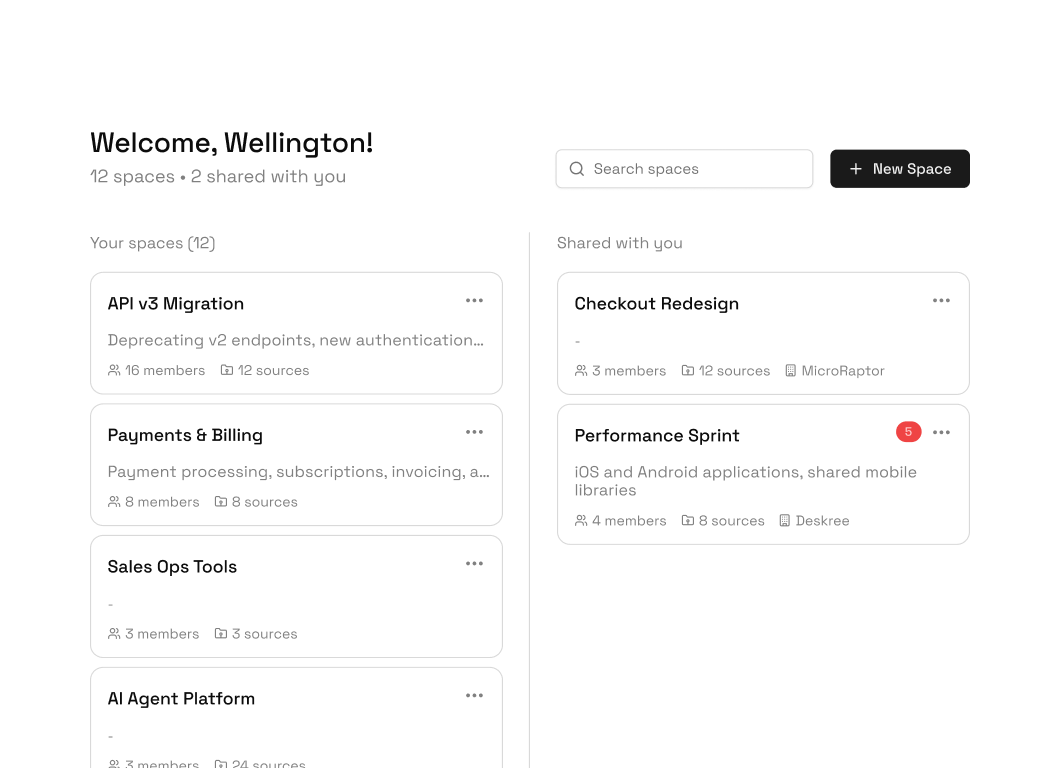

Shared context across teams, scoped access

Create spaces scoped to any project or team. Invite members with RBAC that mirrors your organizational permissions. Save sessions, hand off analysis, resume from any tool or machine.

Seamless stack integration

Connect your sources, then access and integrate everything through one knowledge system.

Deployment & control

Deployed in your cloud. Read-only by design. Every access auditable.

BYOC deployment

Single Go binary deployed in your Kubernetes cluster. No external dependencies.

Bring your own model

Connect your preferred LLM provider. No vendor lock-in on the reasoning layer. Your model, your terms.

Pluggable backends

Swap the graph store, search engine, and object storage for yours.

Read-only by design

Tetrix never executes code, modifies infrastructure, or trains on your data. Zero execution risk.

Granular access control

Row-level security and two-layer role-based access control (RBAC) covering both data sources and AI agents.
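The sketch below shows one possible reading of a two-layer check: a row is returned only when both the user's roles and the agent's roles grant its data source. The role tables, names, and helpers are hypothetical, and the real policy model may differ.

```python
# Hypothetical role tables, for illustration only.
ROLE_SOURCES = {
    "platform-eng": {"infra-repo", "k8s-prod"},
    "support": {"docs"},
}
USER_ROLES = {"dana": {"platform-eng", "support"}, "lee": {"support"}}
AGENT_ROLES = {"review-agent": {"platform-eng"}, "helpdesk-agent": {"support"}}

def allowed_sources(roles):
    """Union of the data sources granted by a set of roles."""
    sources = set()
    for role in roles:
        sources |= ROLE_SOURCES.get(role, set())
    return sources

def can_read(user, agent, source):
    """Layer 1 checks the user's roles; layer 2 checks the agent's roles."""
    user_ok = source in allowed_sources(USER_ROLES.get(user, set()))
    agent_ok = source in allowed_sources(AGENT_ROLES.get(agent, set()))
    return user_ok and agent_ok

def visible_rows(user, agent, rows):
    """Row-level filter: keep only rows whose source passes both layers."""
    return [row for row in rows if can_read(user, agent, row["source"])]

ROWS = [
    {"source": "infra-repo", "text": "terraform module"},
    {"source": "docs", "text": "onboarding guide"},
]
print(visible_rows("dana", "review-agent", ROWS))
```

Requiring both layers to agree means an agent cannot surface data its user could not see, and a user cannot widen an agent's reach.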

Comprehensive auditability

Every single access and action taken by the AI or users can be fully audited.