Build against your knowledge infrastructure.

Tetrix exposes the full knowledge layer through standard protocols. Point any OpenAI-compatible client at the completions endpoint. Every consumer, human or automated, queries the same structured knowledge.

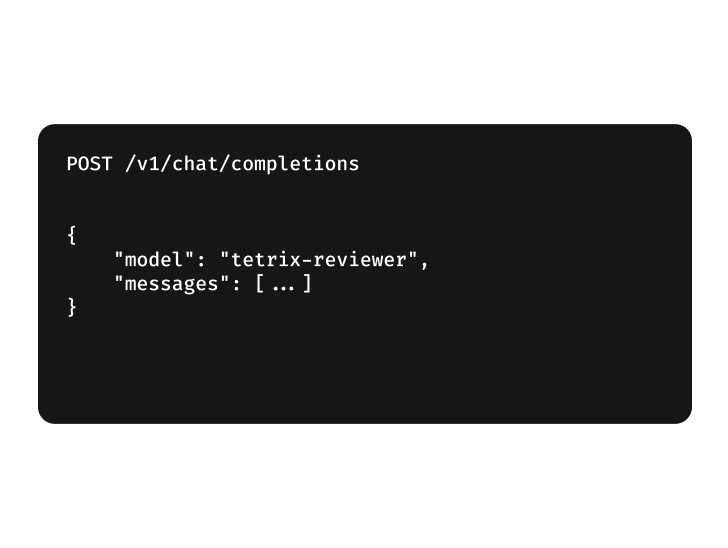

OpenAI-compatible

Point any OpenAI-compatible client at Tetrix.

Tetrix exposes the standard OpenAI-compatible completions endpoint. Any client that speaks the OpenAI API connects by pointing OPENAI_BASE_URL at Tetrix; the request and streaming response shapes stay the same. What comes back is reasoning over your indexed system, cited to the source.
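As a rough illustration, the request a client would send can be built with nothing but the Python standard library. This is a minimal sketch, not Tetrix's documented API: the fallback URL, the `/chat/completions` path (borrowed from the OpenAI API), the `model` value, and the `OPENAI_API_KEY` bearer token are all assumptions. Only `OPENAI_BASE_URL` comes from the text above.

```python
import json
import os
import urllib.request

# Assumed placeholder; replace with the address of your own Tetrix deployment.
DEFAULT_BASE_URL = "https://tetrix.example.internal/v1"


def build_chat_request(prompt, model="default", base_url=None, api_key=None, stream=False):
    """Build (but do not send) an OpenAI-style chat completions request."""
    base = (base_url or os.environ.get("OPENAI_BASE_URL", DEFAULT_BASE_URL)).rstrip("/")
    key = api_key or os.environ.get("OPENAI_API_KEY", "")
    payload = {
        "model": model,  # hypothetical model name
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,
    }
    return urllib.request.Request(
        url=f"{base}/chat/completions",  # path assumed from the OpenAI API
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {key}",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. Because the base URL is the only Tetrix-specific value, an existing OpenAI client should need no other change under these assumptions.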

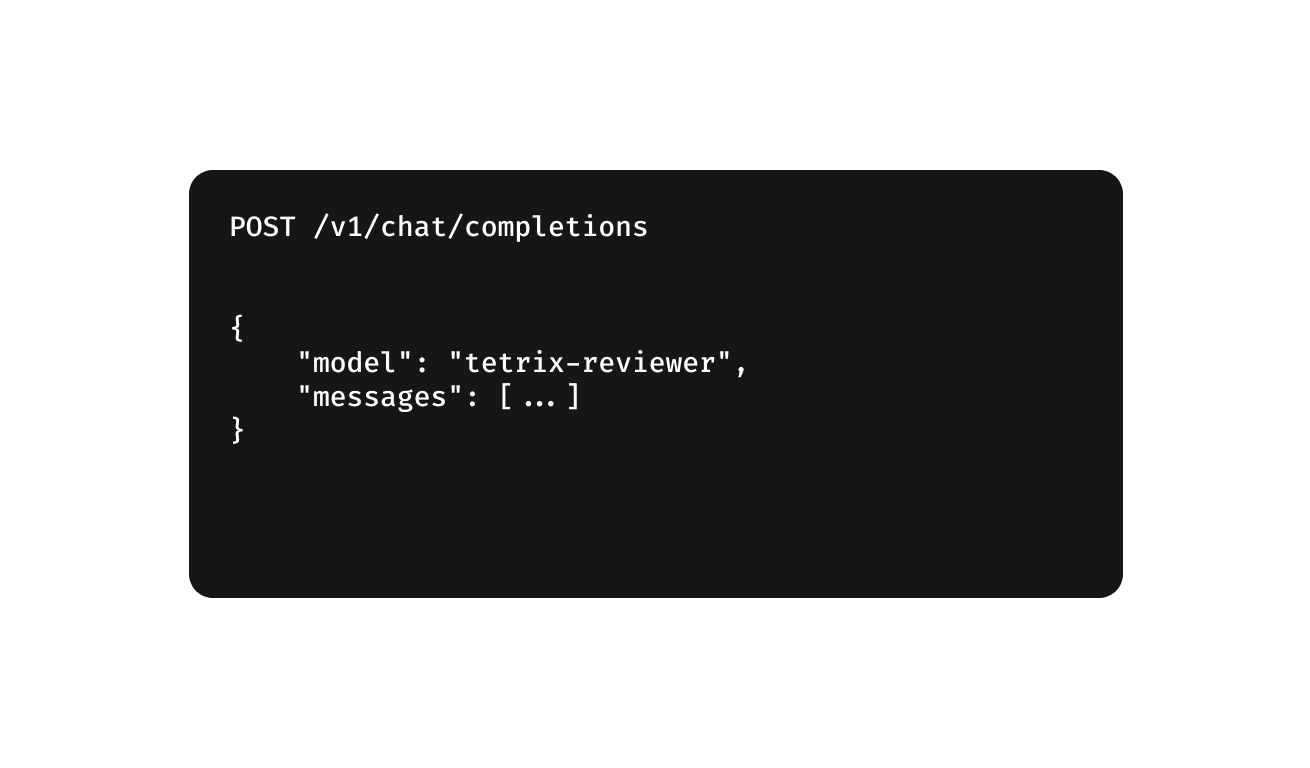

Call agents from code

The same agents your engineers use. Reachable from any pipeline.

Every specialized agent is reachable through the completions endpoint. Target the reviewer to run code review in CI, the knowledge agent for internal portals, or chain agents into custom workflows.
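One way this could look from a pipeline is sketched below. It assumes the agent is selected through the OpenAI-style `model` field and that agents are named `reviewer` and `knowledge`; neither detail is confirmed here, so treat both as hypothetical. The `send` callable stands in for whatever HTTP client actually posts the payload.

```python
from typing import Callable, Dict, List


def agent_payload(agent: str, prompt: str) -> Dict:
    """OpenAI-style request body targeting one agent via the model field (assumed)."""
    return {"model": agent, "messages": [{"role": "user", "content": prompt}]}


def run_chain(agents: List[str], prompt: str, send: Callable[[Dict], str]) -> str:
    """Call each agent in order, feeding every reply to the next agent as its prompt."""
    text = prompt
    for agent in agents:
        text = send(agent_payload(agent, text))
    return text
```

In CI, `run_chain(["reviewer"], diff_text, send)` would be a single review call; listing more agents chains them into a custom workflow.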

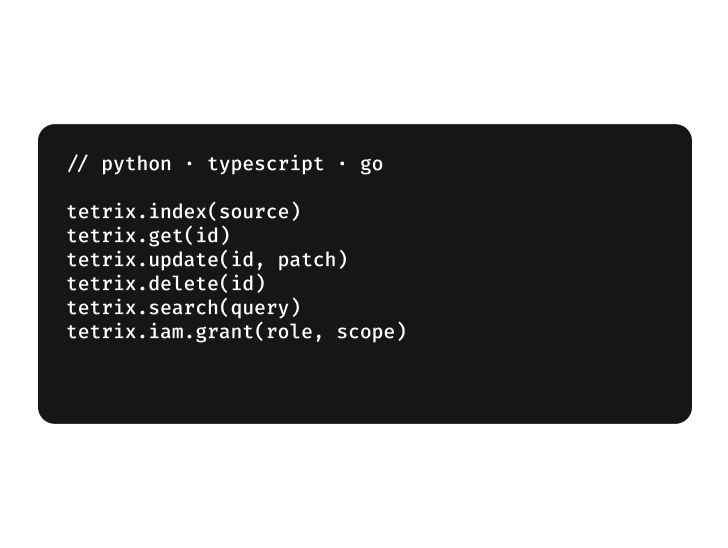

Programmatic control

Full operations over the knowledge layer.

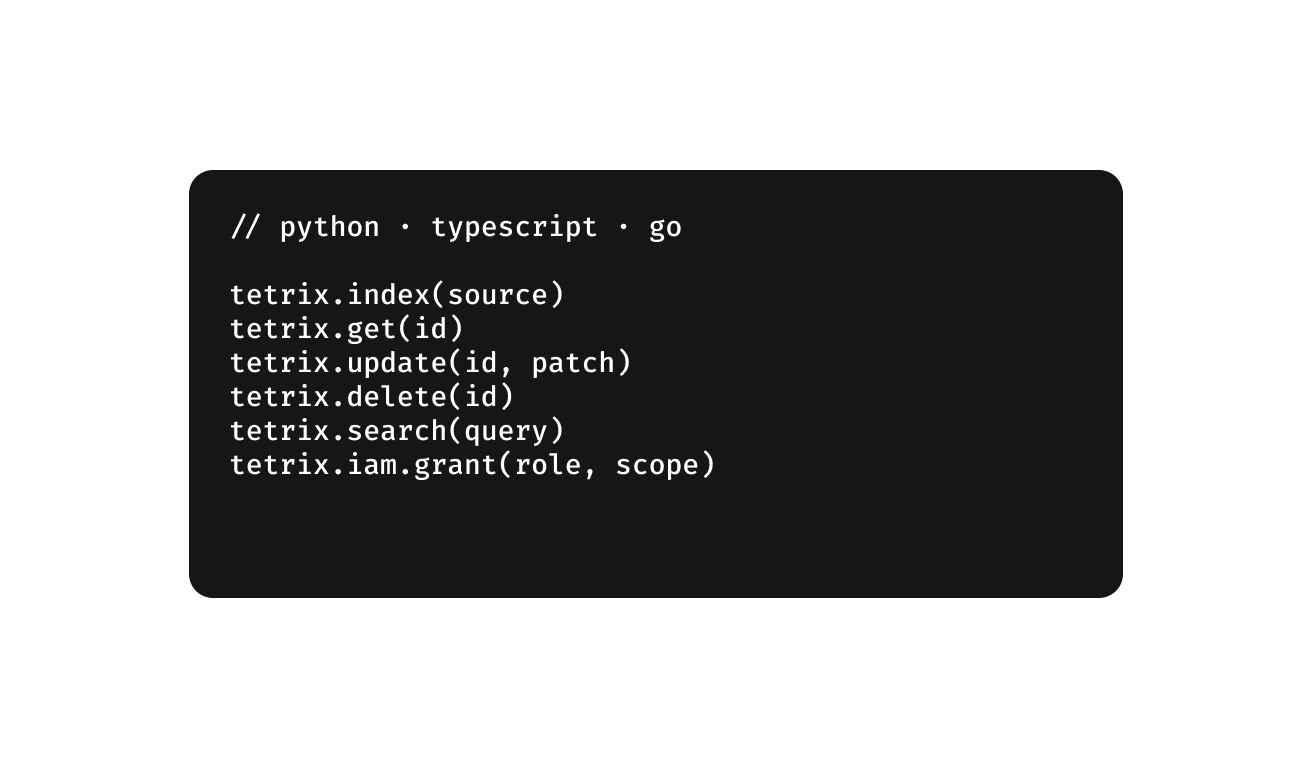

Native SDKs in Python, TypeScript, and Go speak the same binary protocol and expose the full operation set: INDEX, GET, UPDATE, DELETE, SEARCH, and IAM. Everything the platform reads and writes is reachable from your own code. The knowledge ecosystem is something you build on, not just query.
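To make the operation set concrete, here is a toy in-memory stand-in written in plain Python. It is not the Tetrix SDK: the class name, method names, and signatures are invented for illustration, search is a simple substring match, and IAM is left out entirely. It only shows how INDEX, GET, UPDATE, DELETE, and SEARCH relate to one another.

```python
from typing import Dict, List, Optional


class InMemoryKnowledgeStore:
    """Toy stand-in for the operation set; hypothetical, not the Tetrix SDK."""

    def __init__(self) -> None:
        self._docs: Dict[str, str] = {}

    def index(self, doc_id: str, text: str) -> None:
        """INDEX: add a document, replacing any existing one with the same id."""
        self._docs[doc_id] = text

    def get(self, doc_id: str) -> Optional[str]:
        """GET: return the document text, or None if the id is unknown."""
        return self._docs.get(doc_id)

    def update(self, doc_id: str, text: str) -> None:
        """UPDATE: change an existing document; unknown ids raise KeyError."""
        if doc_id not in self._docs:
            raise KeyError(doc_id)
        self._docs[doc_id] = text

    def delete(self, doc_id: str) -> bool:
        """DELETE: remove a document and report whether it existed."""
        return self._docs.pop(doc_id, None) is not None

    def search(self, term: str) -> List[str]:
        """SEARCH: ids of documents containing the term, case-insensitively."""
        needle = term.lower()
        return sorted(d for d, t in self._docs.items() if needle in t.lower())
```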

Ingestion flexibility

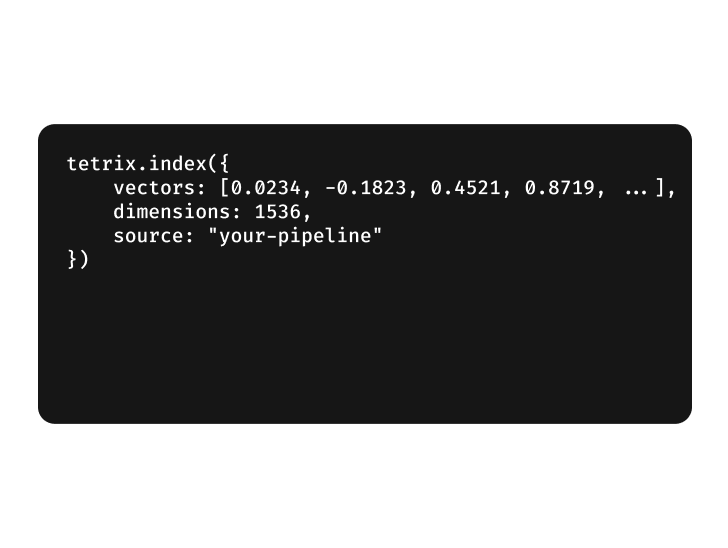

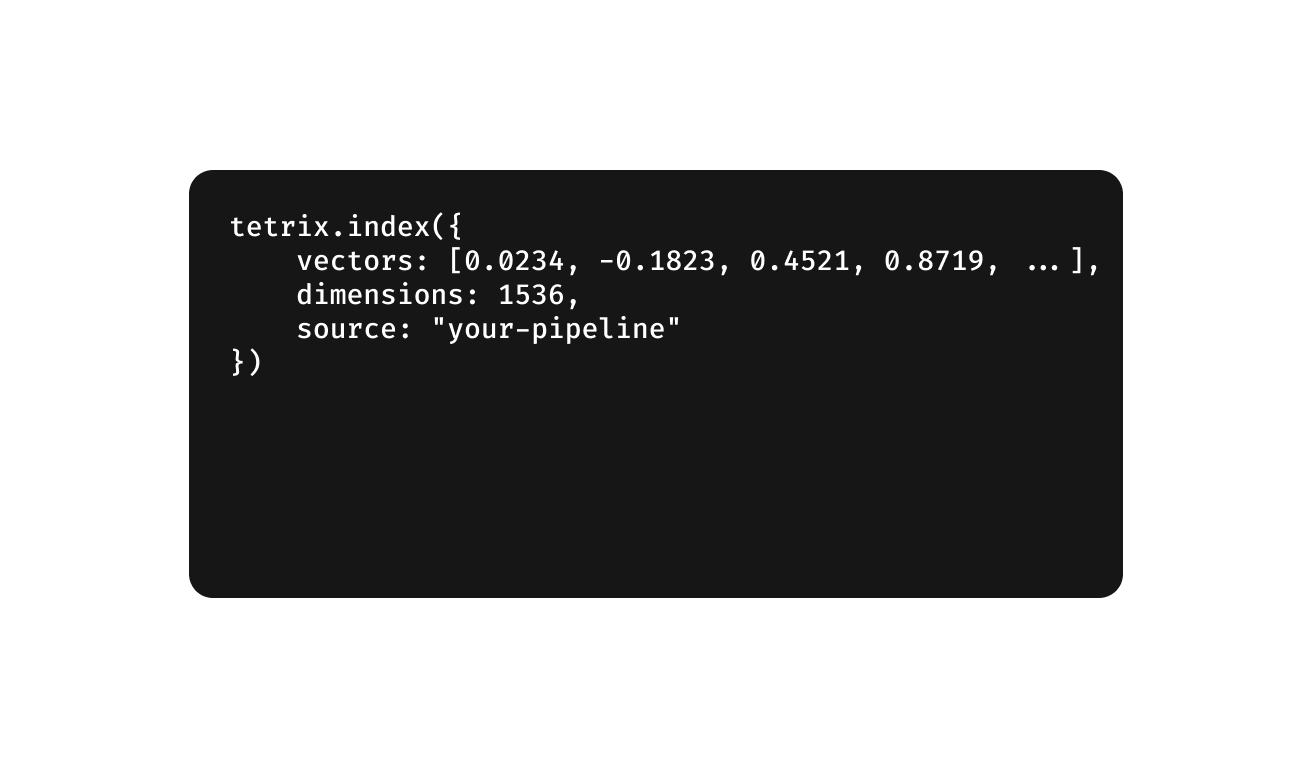

Already have an embedding pipeline? Send vectors directly.

The INDEX operation accepts pre-computed vectors, so your existing pipeline becomes the ingestion layer, whatever the model and whatever the dimensions.
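A pipeline that already produces embeddings might package them along these lines before handing them to INDEX. The record layout (`op`, `id`, `text`, `vector`, `dimensions`) is a hypothetical shape chosen for this sketch, not the actual wire format. The validation simply guards against empty or non-finite vectors, which are common pipeline bugs.

```python
import math
from typing import Dict, Sequence


def make_index_record(doc_id: str, text: str, vector: Sequence[float]) -> Dict:
    """Build a hypothetical INDEX record that carries a pre-computed vector."""
    values = [float(v) for v in vector]
    if not values:
        raise ValueError("vector must not be empty")
    if not all(math.isfinite(v) for v in values):
        raise ValueError("vector contains NaN or infinity")
    return {
        "op": "INDEX",
        "id": doc_id,
        "text": text,
        "vector": values,
        "dimensions": len(values),  # any length is accepted in this sketch
    }
```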

Enterprise-ready by default

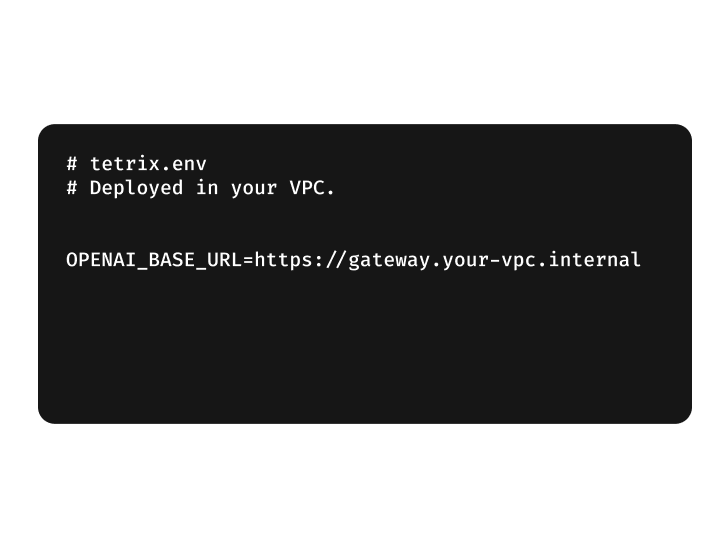

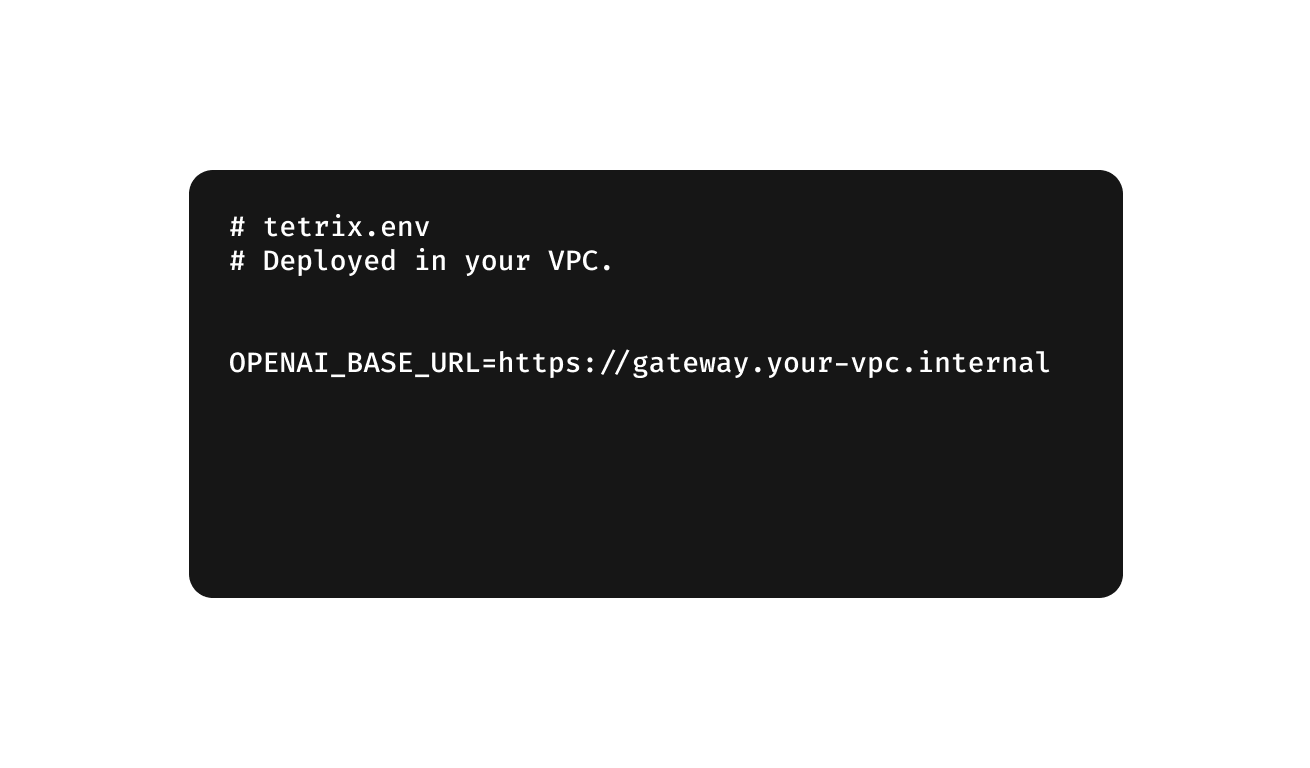

Route all traffic through your own API gateway with one environment variable.

Row-level security filters every query by the caller's permissions before any data returns. The platform deploys in your cloud, so every call resolves inside your perimeter.
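The idea behind row-level filtering can be sketched in a few lines. This is a conceptual illustration only, assuming permissions are modeled as group names attached to each row; how Tetrix actually represents and enforces permissions is not described here.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Row:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]  # hypothetical permission model


def visible_rows(rows: List[Row], caller_groups: FrozenSet[str]) -> List[Row]:
    """Keep only rows that share at least one group with the caller."""
    return [r for r in rows if r.allowed_groups & caller_groups]
```

Applying the filter before results are assembled means a caller with no matching group sees an empty result rather than a redacted one.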