Deep research with your prebuilt knowledge.

With Tetrix, your LLM works against a knowledge system that already knows the shape of your code, what depends on what, and where everything lives. It is not discovering your stack on every query.

Purpose-built for depth

For the research that crosses repositories, documents, and standards at once.

Deep Research is for investigations, not inline completions. Architecture reviews that span six repos. Migration plans that trace data through fifteen services. Root-cause analysis that needs to correlate a deploy, a config change, and a code path. Deep Research in Tetrix runs the queries that no single file can answer.

Specialized agents

Agents that know their domain and take real actions.

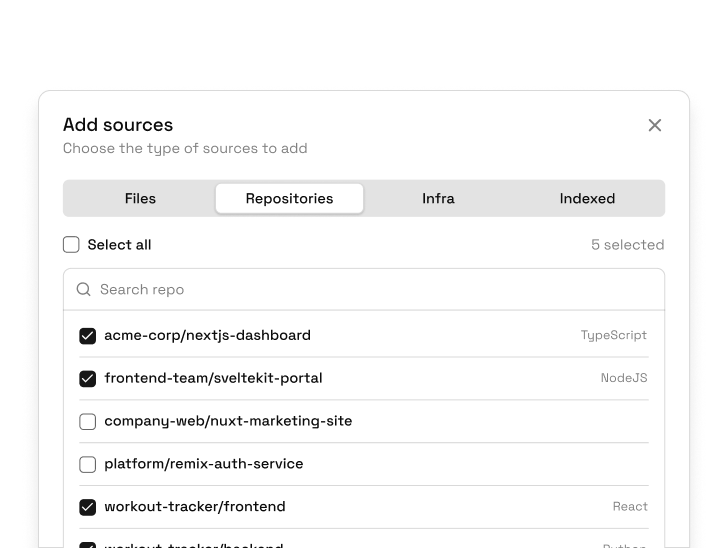

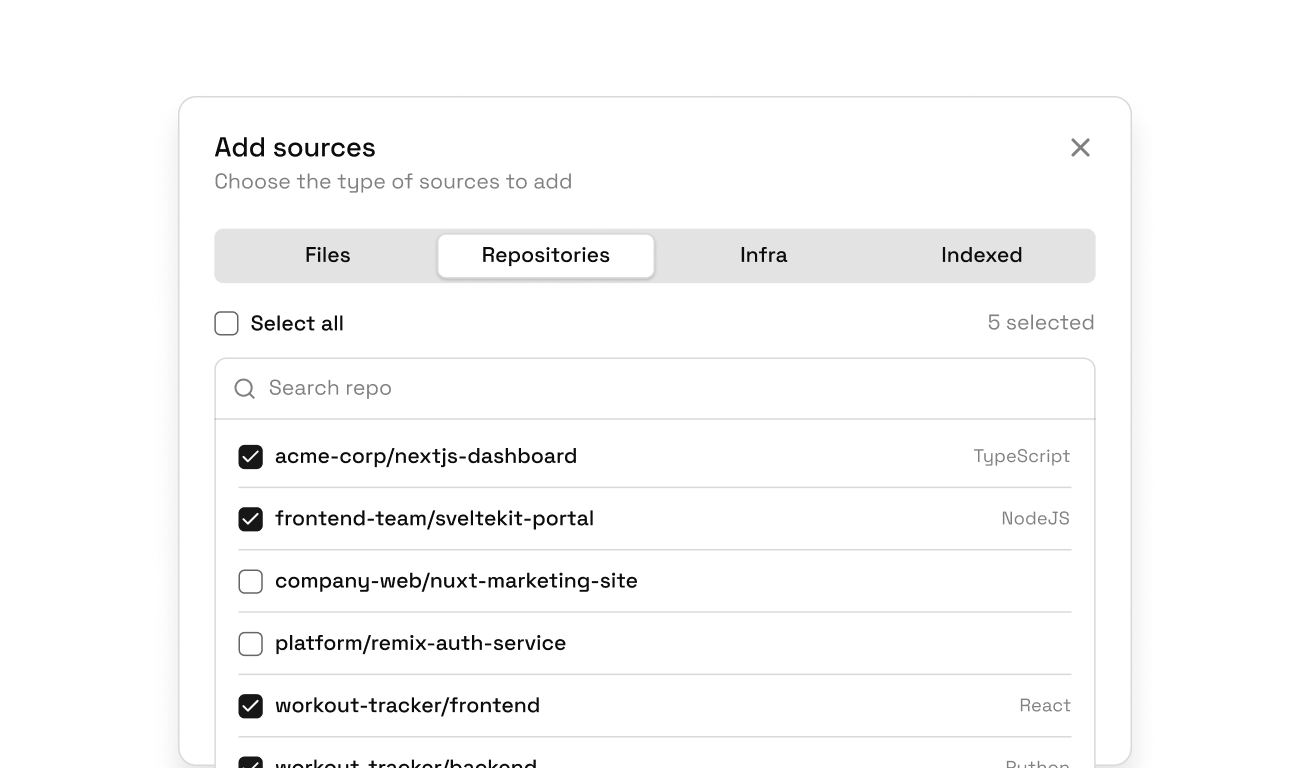

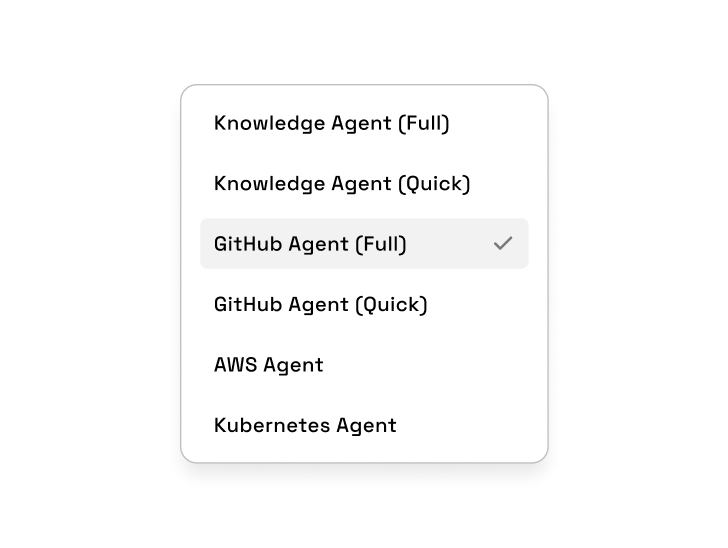

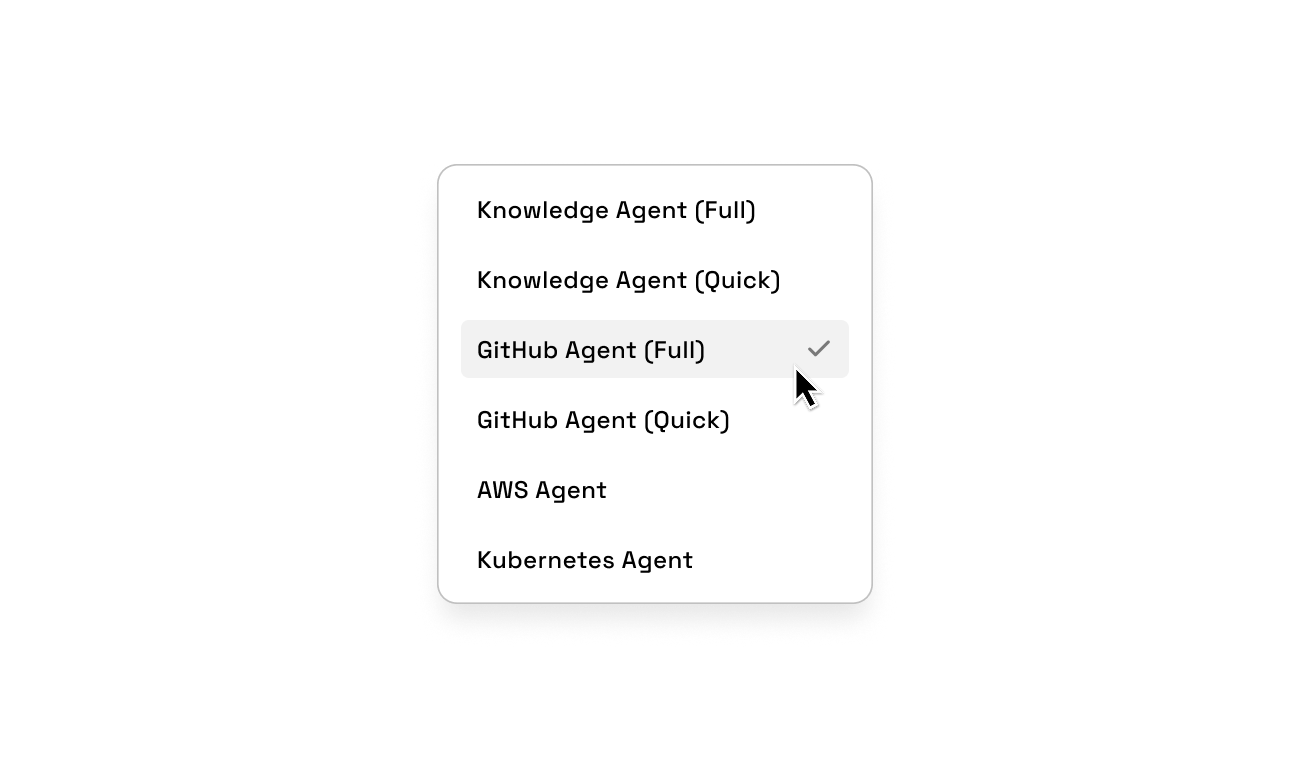

Pick the agent that fits the question. The Knowledge Agent queries your indexed graph directly, with no external calls. The GitHub, GitLab, and Bitbucket Agents read code, analyze PRs, and open issues without leaving the session. The AWS Agent pulls live resource configurations. Each agent is scoped to its domain and authorized to act in it.

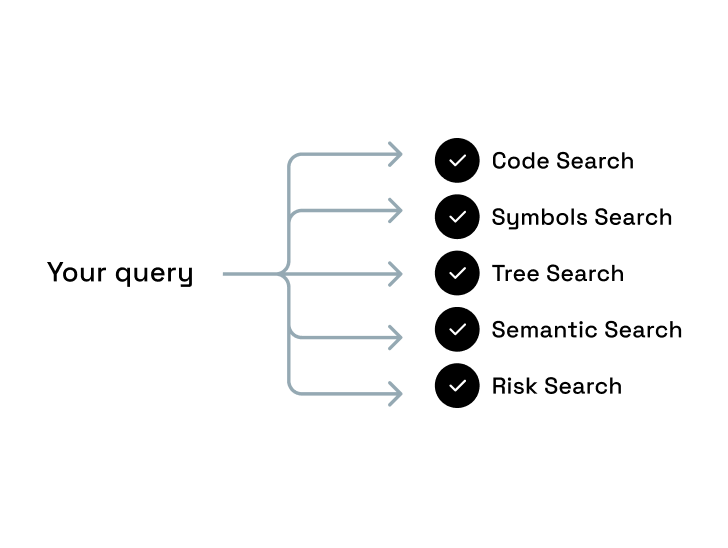

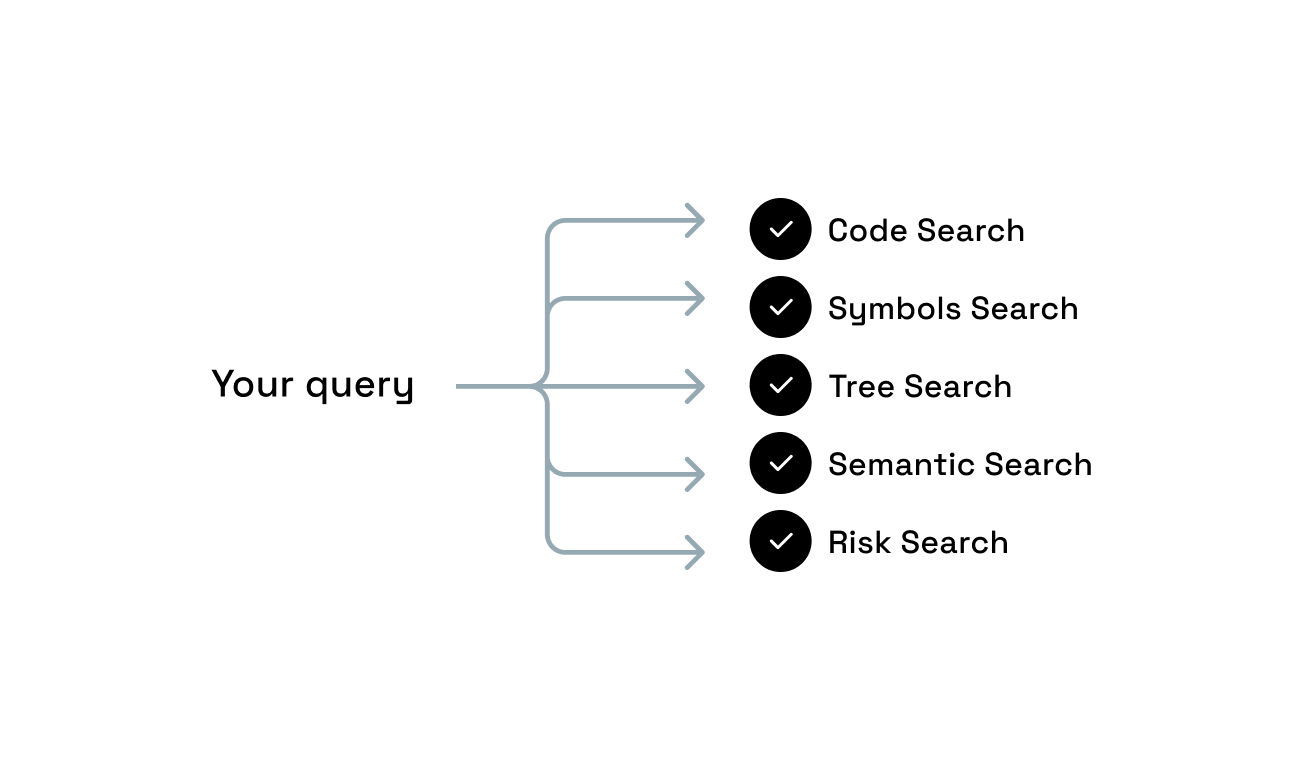

Deterministic retrieval

Every question resolves against structure that already exists.

Most AI tools discover your system at query time, and that is where accuracy breaks. Tetrix works differently. The agent runs five queries in parallel against a structure that already exists: code search, symbol traversal, dependency trees, semantic matching, and blast radius analysis. Nothing is being figured out in the moment.
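To make the parallel fan-out concrete, here is a minimal Python sketch. It is not the Tetrix API: the retriever names simply mirror the five query types listed above, and `run_retriever` and `fan_out` are hypothetical stand-ins that return empty results instead of reading a real index.

```python
import asyncio

# Hypothetical names mirroring the five query types listed above.
RETRIEVERS = (
    "code_search",
    "symbol_traversal",
    "dependency_tree",
    "semantic_match",
    "blast_radius",
)


async def run_retriever(name: str, question: str) -> dict:
    # Stand-in for a lookup against a prebuilt index (assumption: no real I/O).
    await asyncio.sleep(0)
    return {"retriever": name, "question": question, "hits": []}


async def fan_out(question: str) -> list:
    # Launch all five lookups concurrently; gather returns results
    # in the same order as the awaitables it was given.
    return await asyncio.gather(
        *(run_retriever(name, question) for name in RETRIEVERS)
    )


results = asyncio.run(fan_out("What breaks if we change the auth token format?"))
for result in results:
    print(result["retriever"], len(result["hits"]))
```

The point of the sketch is the shape of the call: every lookup targets structure that was built ahead of time, so the lookups can run side by side rather than one discovery step feeding the next.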

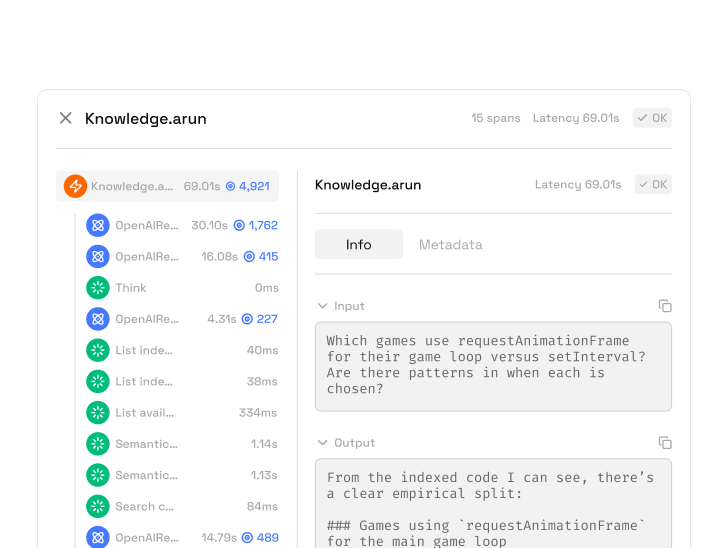

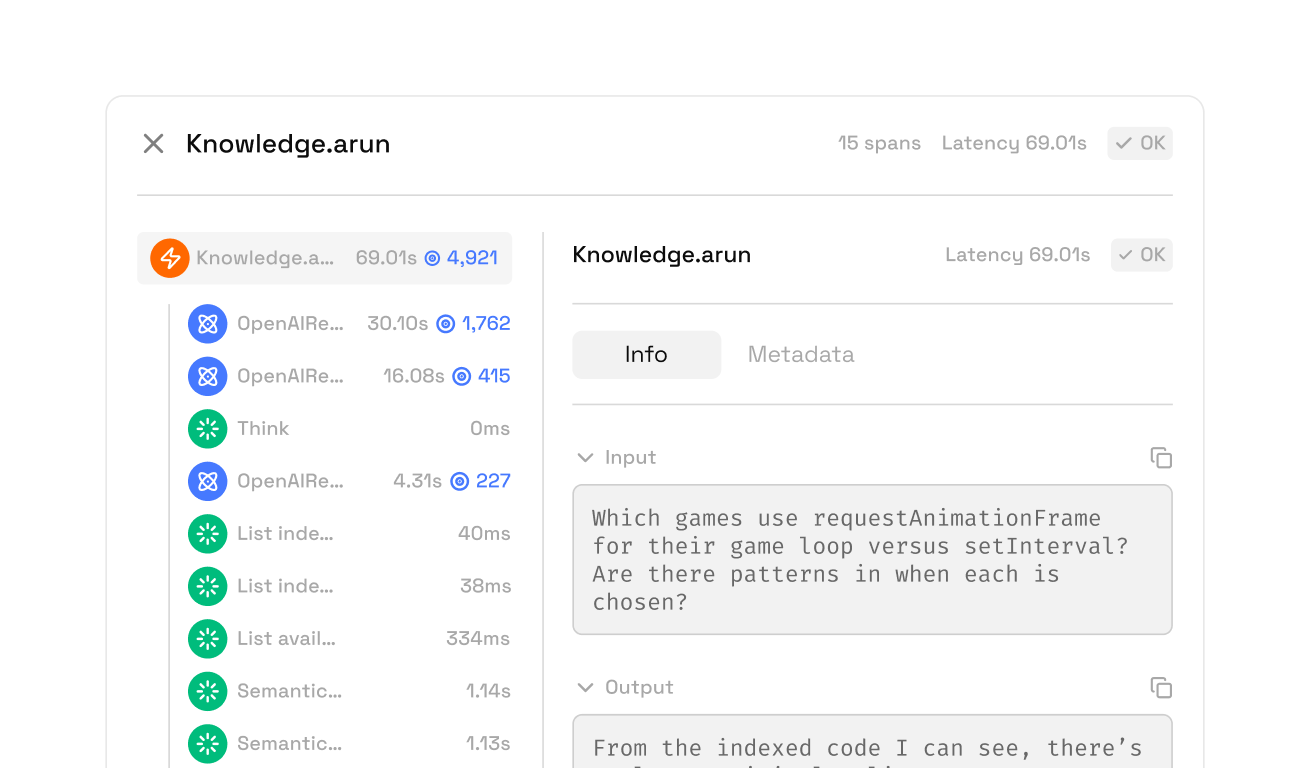

Transparent reasoning

Every reasoning step the agent took. On the record.

Open the trace of any response and see the complete reasoning path: which tools were called, in what order, what they returned, and how long each step took. A complete record of what the agent did, not just a summary of what it "thought."
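As a rough illustration of what such a trace could hold, the Python sketch below records each tool call in order with its return value and duration. The `Trace` and `TraceStep` types and the sample tool outputs are assumptions made for this example, not the format Tetrix actually stores.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class TraceStep:
    tool: str           # which tool was called
    returned: Any       # what it returned
    duration_ms: float  # how long the step took


@dataclass
class Trace:
    steps: List[TraceStep] = field(default_factory=list)

    def call(self, tool: str, fn: Callable[[], Any]) -> Any:
        # Time the call and append a step, so list order equals call order.
        start = time.perf_counter()
        result = fn()
        elapsed_ms = (time.perf_counter() - start) * 1000
        self.steps.append(TraceStep(tool, result, elapsed_ms))
        return result


trace = Trace()
# Hypothetical tool outputs, hard-coded for illustration only.
trace.call("code_search", lambda: ["auth/token.py"])
trace.call("dependency_tree", lambda: {"auth": ["gateway"]})

for number, step in enumerate(trace.steps, start=1):
    print(f"{number}. {step.tool} -> {step.returned!r} ({step.duration_ms:.3f} ms)")
```

Because every step is appended at call time, the record reflects what was executed rather than a summary written afterwards.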

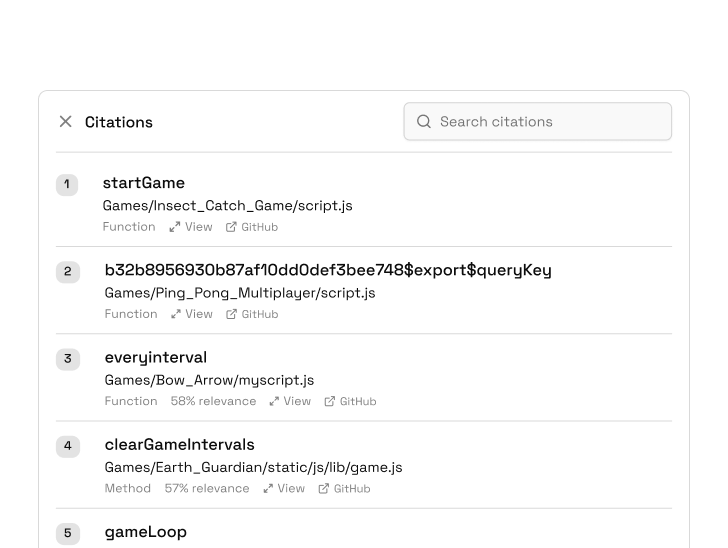

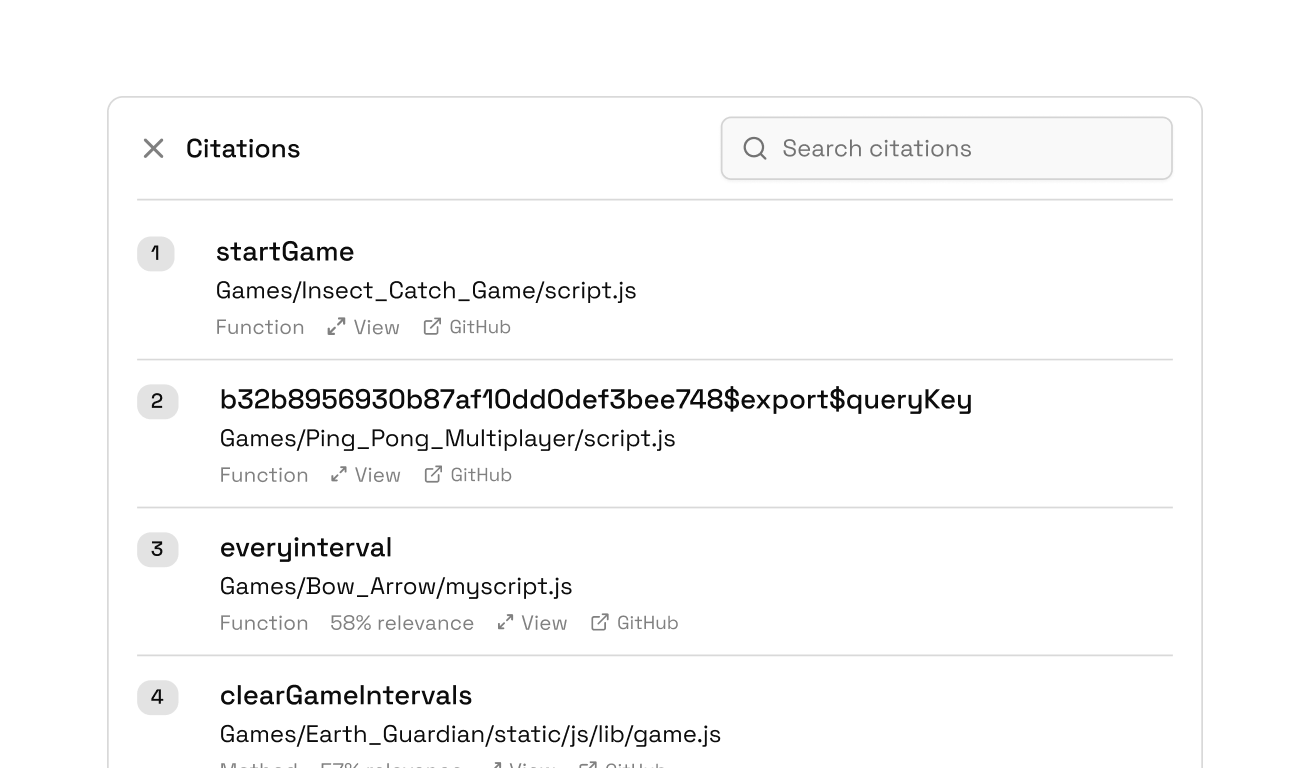

Sourced answers

Every claim points to the structure it came from.

Every response shows what informed it: the exact indexed code or document, along with source counts. Open the citation to see the original. Nothing is paraphrased from the model's memory. The response is grounded in structured knowledge you can inspect.